Causality is NOT for the faint of heart

Why causal proof demands courage and ice in your veins — two war stories that show what's actually at stake.

We all want to know how the world works, but only a few have the courage, tenacity and integrity to do the very hard work of finding out.

Explanations are ten a penny, but good explanations are very hard to come by

My girlfriend constantly says that she feels in direct competition with David Deutsch because I talk so much about how awesome he is, which always reminds me that I don’t write enough about Deutsch in the chronicle because he is so goddamn awesome.

In the video below (which you should absolutely not start watching because you won’t get back to this chronicle this month, possibly this year) I love Deutsch’s definition of explanations: “An explanation is a statement of what is there in reality, of how it works, and why.”

He continues: “explanations are ten a penny, but good explanations are extremely hard to come by, and this is what the growth of knowledge is actually all about.”

David Deutsch - What is Truth?

In this chronicle, I’d like to delve a little bit into one specific component of good explanations, which is the production of causal proof. Oh man.

I’m going to talk about two examples of causal proof war stories, trying to convey how causal proof is even more powerful than it seems, but also why it is so bloody hard to produce it and why so few people rise to the challenge of actually doing it.

Stories of Courage and Causality

My thesis for this chronicle is that you need integrity, the courage of a lion, and ice in your veins in order to produce causal proof. If you are a person for whom it is critical to be liked and experienced as pleasant, don’t get into the business of trying to produce causal proof, and instead leave that to truly hardcore people and groups.

I am going to tell a story about ludicrously large investments into music streaming latencies, but first I will tell a story about combating child sexual abuse.

What initiatives actually protect children?

Combating child sexual abuse is one of those deeply challenging fields where everyone wants to help, yet finding truly effective interventions has been daunting. I know people who work at the World Childhood Foundation, and their mission is to protect children from exploitation and abuse. They’re driven by scientific rigor, which can often be a rare thing in fields like this, where well-meaning initiatives are plentiful, but evidence-based solutions are sparse.

Now, there are countless programs aimed at helping children. But the effectiveness of many of these, especially in reducing child sexual abuse, has historically been hard to measure or even determine. Take orphanages, for instance—a well-intentioned model funded by people worldwide. Orphanages receive massive donations, and yet, from an evidence-based perspective, they don’t serve children’s best interests.

Supporting children within their families or local communities, or at the very least in family-based care, has been shown to produce better outcomes: Institutionalisation and deinstitutionalisation of children (The Lancet)

But the problem persists: without clear proof of what works specifically to reduce child sexual abuse, it’s tough to justify or attract large-scale investment in any given solution.

Recently, though, one of World Childhood Foundation’s partners - Parenting for Lifelong Health - made a breakthrough. They conducted a large (n=4800), placebo-controlled study on a parenting app aimed at under-resourced communities, designed to help parents with support, education, and practical tools. Here’s where it gets remarkable: the study revealed, well within the bounds of statistical significance, that families who used this app reported a marked reduction in sexual violence vulnerability and victimisation of children. Even better, it showed a cascade of other positive impacts, from improved family dynamics to poverty reduction and better mental health.

This is a remarkable feat in a space where there are extremely few initiatives that are cost-effective, can be scaled, and show a predictable effect against child sexual abuse, and it cannot be overstated how hard and unrewarding something like this is to get in place.

Halo of Indisputability

Most people who have worked in a larger organization have noticed, to their frustration, that a high-priority effort, especially an urgent one, is less likely to be scrutinized for efficacy. The reasoning usually goes like this: “This cause is so important that any work towards it is automatically assumed to be good work.”

In child protection, this effect is taken to its extreme. Protecting children from harm, and especially sexual abuse, is an issue that resonates so deeply and broadly, that it requires nerves of steel to raise concerns about efficacy. Even though orphanages are highly dubious compared to many other more effective efforts, pointing that out is not exactly going to make you a hoot at parties.

On a greater scale, merely creating a controlled study opens you up to being criticized as heartless. If you believe an effort to be effective in helping families, then in order to prove it you need a control group, which gets a “placebo” effort that you know is not effective. This is the way we must do things to know things scientifically, but it is a significantly less direct, gratifying or flattering way than donating money to an orphanage. Proving that your way works is a LOT harder than just doing the status quo of what is currently accepted as being good.

A great crusade for 30 milliseconds

When I was told this story, it took me back to my time at Spotify and the studies done that led to an enormously complicated initiative called head files.

When I worked at Spotify, we rolled out so many features in A/B tests that it was ludicrous. Everyone was targeting the grand North Star Metric, which at the time was Second Week Retention (the % of new users still streaming 14 days after signing up). We had figured out that this metric was a strong predictor that someone would use Spotify basically forever, meaning that if a feature nudged this metric ever so slightly, it made Spotify a hojillion kabillion dollars (approx.)

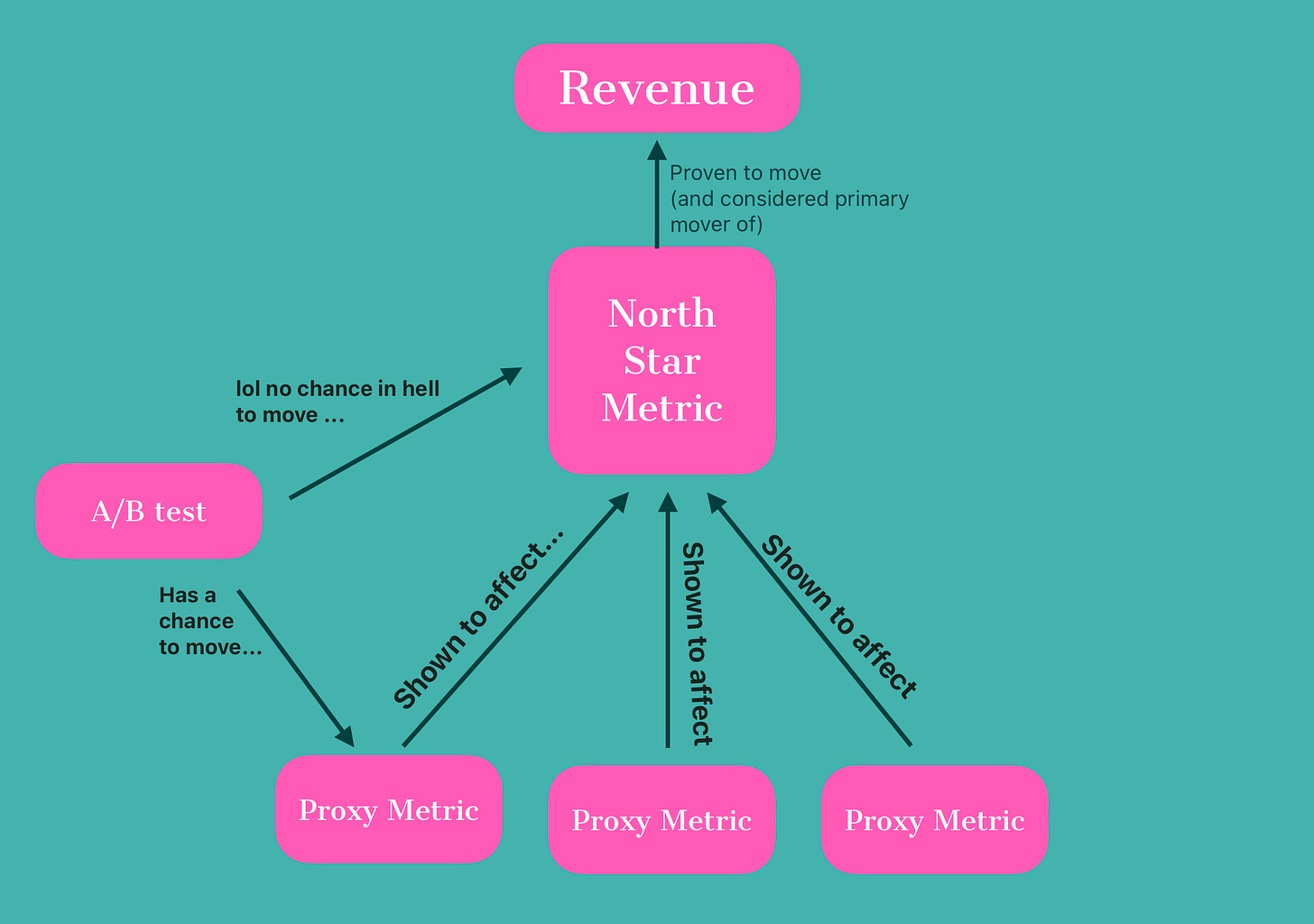

The problem was, this metric was near impossible to budge with any single rollout, at least to statistical significance, so A/B tests were kind of pointless towards it. In order to tackle this, the data team had set out to create proxy metrics that were more feasible to affect with an individual change to the software, but that we knew IN TURN to affect the North Star metric.
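To get a feel for why a single rollout struggles to move a retention metric to statistical significance, here is a minimal back-of-the-envelope sketch. It uses the standard sample-size approximation for a two-sided two-proportion z-test. The 60% baseline, the lift sizes and the function name `users_per_arm` are made up for illustration and are not Spotify's numbers.

```python
from math import ceil, sqrt
from statistics import NormalDist


def users_per_arm(baseline, lift, alpha=0.05, power=0.80):
    """Approximate users needed in EACH arm of a two-arm A/B test to detect
    an absolute `lift` over a `baseline` rate (two-sided two-proportion z-test)."""
    p1, p2 = baseline, baseline + lift
    p_bar = (p1 + p2) / 2
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for significance
    z_power = NormalDist().inv_cdf(power)          # critical value for power
    numerator = (
        z_alpha * sqrt(2 * p_bar * (1 - p_bar))
        + z_power * sqrt(p1 * (1 - p1) + p2 * (1 - p2))
    ) ** 2
    return ceil(numerator / lift ** 2)


# Hypothetical baseline retention of 60%; the lifts are illustrative only.
for lift in (0.05, 0.01, 0.001):
    print(f"lift of {lift:.1%}: ~{users_per_arm(0.60, lift):,} users per arm")
```

The required sample grows with the inverse square of the lift, so a change ten times smaller needs roughly a hundred times as many users. Under these assumed numbers, a tenth-of-a-percentage-point nudge already calls for millions of new users per arm.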

You can learn more about The North Star Framework at Amplitude but because I am the best at this stuff I have summarized everything you really need to know about the North Star framework in this chart:

In experimental ethics, can free tier users really be considered to be human?

In order to do this, the data team did something that was extensively criticized internally as unethical, which was to intentionally induce crashes and slowdowns in the client, just to test if that made users leave. Not exactly unethical enough to send one to the Hague, but arguably it was kind of torturing users for science, and Spotify's internal discussion groups were quite fiery during this era of the company.

Either way, the results of this experiment were incredible - the data team created unequivocal causal proof that three metrics were proxy effectors of the north star second week retention metric. I am drawing from memory so this might not be completely accurate but I believe they were:

Number of client crashes

Number of plays that took more than 1 second to start

Stutter during the first week

If these went up, second week retention went down. Ergo, if one does an initiative that moves these proxy metrics in a positive way, that initiative can be incredibly expensive to do, and still be easily worth it.

OMG guys someone scienced it LFG

While this might seem common sense, there is a huge difference between knowing that something is “most likely” so and “we’ve proven this causally with massive amounts of data” in how much you are willing to bet on something.

In this case, it allowed Spotify to do an absolutely ludicrously boring, expensive and thankless project that I do not see getting done any other way. In order to get streaming latency down (and remember here that Spotify has absolutely INSANELY GOOD streaming latency as it is) we wanted to put the music on CDNs closer to the users. The problem with this is that the licenses that Spotify has with labels require song files to be individually encrypted to the user before they are sent over the wire in order to prevent piracy.

So what Spotify had to do was to go to ALL the labels (and there are a lot of them, and they are INCREDIBLY fierce negotiators) and convince them to give Spotify the first couple of thousand bytes of each song unencrypted, so that these “head files” could be created for every song in the entire catalog (100 million songs give or take) and replicated to CDNs all over the world. All of this had to be implemented at the very core layer of the player stack, meaning that every single client in the fleet (desktop, mobile, Sonos systems, CarPlay, PlayStation, etc.) was affected.

The result of this effort? I think it was a 30ms latency improvement or something to that effect. It seems silly, but I strongly believe that this kind of stuff is why Spotify, as a small tech company from Sweden, could hold its own against its titan competitors. I have a little yearly ritual of trying out Apple Music, and being used to the subtle yet extreme snappiness of Spotify brings me back every time.

And without being backed by the proxy metric causal proof that the data team had produced, there is no way such a huge effort for such a (seemingly) insignificant improvement could be justified, but knowing it, this was heroics.

This is the power of causal proof.

Mostly, the people that actually create the causal proof are unsung heroes - the praised are often the first ones that leverage the proof to confidently blaze through what was previously unknown territory.

Tonight, from my terrace in Granada, Spain, I raise a glass to you all, the unsung, ice cold, hardcore, causality creators. Thank you!

Stay Curious.