If you were born in 1983, you viscerally understand GPU Heat

Growing up with the GeForce 256 ruined free AI tiers for me — and that instinct turns out to be correct.

The Rising Costs of AI (And Why That's Actually Good)

hey 👋

I've been thinking a lot about something lately, and while this isn't technically a sponsored newsletter, it's inspired by the forces in the world of an upcoming sponsor.

When I was brainstorming on the script for the sponsor video, I ended up with a side-musing that was so interesting that it warranted further exploration in today's chronicle.

The True Cost of AI is Finally Showing

AI has started costing more lately. OpenAI just rolled out some bizarre $200/month tier that gives you more Sora credits, and honestly - I feel like AI is finally starting to cost what my neurology feels it should cost.

There is a specific reason why I'm like this - I was born in 1983, which means I started playing Quake 2 at a particular time in my life, which was formative. Kids these days take 60 FPS for granted - we had to fight with blood, sweat and tears for a smooth 30 FPS.

In my teens I bought a GeForce 256 (Ref: Nvidia GeForce 256 celebrates its 25th birthday) with money I earned from selling collected golf balls before that turned into an elaborate industry, and I installed that GPU with my own pimply hands into an ATX case.

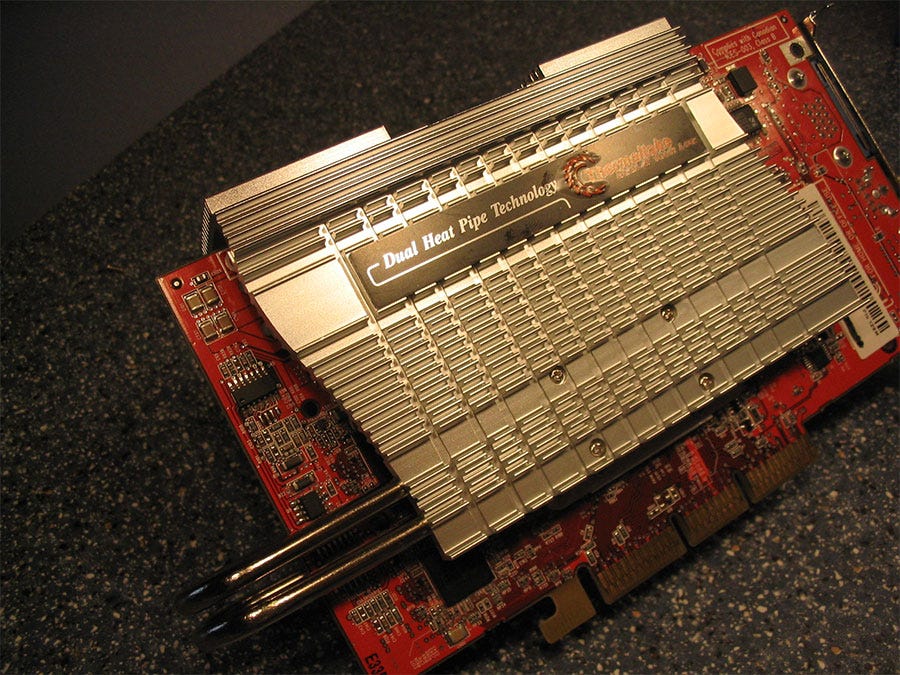

I even put custom coolers on my GeForce to squeeze out a few more frames per second in Quake 2 (I was garbage at the game, so I really needed that edge over my friends who were actually good at it).

I actually had one of these:

I don't want to pull the "I'm older and wiser than you" card (and by that I mean I totally want to pull that card), but I'm part of a generation that viscerally understands how much heat a GPU generates – it's literally fused into my childhood memories. We understood the value of these things because we had to slave away all summer to get one. And the GPUs we got? They were cheap compared to what's running in today's data centers.

The GeForce 256 was marketed as the world's first GPU, and I held that little treasure in my tiny, weak, insecure, trembling teenage hands. The value of a GPU was profound to me.

There is no such thing as a free ~~lunch~~ GPU

So when someone with this background notices that OpenAI has a free tier? That's fishy. It's unnatural. It's a "don't take candy from strangers" situation. When somebody's giving out free candy in the city, you want to know why. That's weird AF.

The economics won't add up for a while. It's been widely reported that Microsoft is losing $20 a month on each $10 Copilot user. Some users even set them back $80 per month. GPUs are really, really expensive to run.
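To make that arithmetic concrete, here's a tiny back-of-envelope sketch. It assumes the quoted losses are monthly and sit on top of the $10 subscription price; the function name `implied_cost` is just something I made up for illustration.

```python
# Back-of-envelope only. Assumption: the quoted losses are per user per month,
# on top of the $10 subscription the user is already paying.
PRICE_PER_MONTH = 10  # USD, the Copilot price quoted in the text


def implied_cost(loss_per_month: float, price: float = PRICE_PER_MONTH) -> float:
    """Cost to serve one user = what they pay + what the provider loses on them."""
    return price + loss_per_month


average_user = implied_cost(20)  # the average loss quoted in the text
heavy_user = implied_cost(80)    # the heavy-user loss quoted in the text

print(f"Average user: ~${average_user:.0f}/month to serve")
print(f"Heavy user:   ~${heavy_user:.0f}/month to serve")
```

If those figures hold, the average user costs about three times what they pay, and the heaviest users about nine times.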

In my mind, I model (haha) large language models kinda like a sophisticated search – fancypants Googling. As developers, maybe it's a bit facetious to say that 90% of our job is Googling, but 90% of our time certainly is.

When we have LLMs built into our editors, this gets even more intense. We generate LLM requests as we type – every time code completion triggers, it now pings a large language model.

A programmer will easily make 10, maybe 100 times more requests to an LLM than the average user, like a lawyer or research scientist. We just keep pumpin' that LLM.

The case for specialised ~~models~~ everything

If you're using an LLM thousands of times per day, you really don't want to pay for more model than you need.

I don't want to pay compute costs for a billion parameters worth of cookbooks or the entire Library of Alexandria thousands of times per day when I'm trying to untangle some hellscape of Salesforce export modules written in 2010. I want that model to be speedy, cheap, and most importantly, not wasteful.

When was the last time you actually experienced that bigger was better? I'm personally not a huge believer in enormous monolithic LLMs like GPT and Claude. They're cool, but that $200/month pricing hints at their inefficiency problem. I'm far more interested in purpose-built models, and structures and systems that coordinate models.

One of my absolute favourites is SQL Coder, an open-source LLM focused only on SQL – they recently released a new version with just under 70 billion parameters. For context, GPT-4 is reportedly around one trillion parameters (i.e. GPT-4 has more than 10x the number of parameters).

Here's the fascinating part: even though SQL Coder is so much smaller than GPT-4, it outperforms it because it's focused on one thing. It's not just better – it's also much cheaper. When you look at correctness per compute hour, which is what really matters, SQL Coder shines.

We live in the real world of time and energy. Even if I'm a wealthy developer who can throw money at the problem, there's still the issue of feedback and latency. If my autocomplete starts taking more than 100 milliseconds, that's a problem – I'm not getting feedback fast enough on my typing.

In general, to become more effective as humans, as economies, as complex adaptive systems, we tend to specialise. We don't typically succeed with big, general-purpose systems.

Sure, we might start that way – remember when we used Ruby on Rails for everything? (I loved those days!) Or ASP.NET, which, oh my god, did everything for you? There was even that era where everything web-related was done in Dreamweaver. Those were comfortable times, but we learned that specific tools for specific problems work better.

It's just how the universe works. Doctors specialise. Lawyers branch into human rights or corporate law or real estate. We don't use bazookas to kill flies.

The really interesting Context Window Asymmetry Problem

Here's where we hit a really interesting challenge with AI coding platforms – the context window asymmetry.

We have these impressive LLMs like Claude with its 200k token context window. That's fantastic for research papers where you can dump in big PDFs. GPT-4 isn't far behind at 128k. Sure, it's slow and expensive when maxed out, but it works.

But for code bases? 200k tokens doesn't get you far. Take the Quake 3 engine – about 300k lines of code. At roughly 15 tokens per line, that's about 22 times larger than Claude's maximum capacity. And Quake 3 is compact! Look at MySQL with its 12.5 million lines of code – at the same rate, that's almost 1,000 times larger than Claude's best context window.
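If you want to check the napkin math yourself, here's a minimal sketch. The 15 tokens per line is the rough guess from above, not something measured with a real tokenizer, and `windows_needed` is a name I made up for illustration.

```python
# Back-of-envelope only: 15 tokens per line is a rough guess,
# not a measurement from a real tokenizer.
TOKENS_PER_LINE = 15
CONTEXT_WINDOW_TOKENS = 200_000  # Claude's context window as quoted in the text

# Approximate line counts as quoted in the text
CODEBASES = {
    "Quake 3 engine": 300_000,
    "MySQL": 12_500_000,
}


def windows_needed(lines_of_code: int) -> float:
    """How many full context windows the codebase would fill."""
    return lines_of_code * TOKENS_PER_LINE / CONTEXT_WINDOW_TOKENS


for name, loc in CODEBASES.items():
    print(f"{name}: ~{windows_needed(loc):.1f}x the context window")
```

Swap in your own codebase's line count and a measured tokens-per-line figure and the picture probably won't get much rosier.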

Here's what gets me – On social media I keep seeing these excited posts about how fast people can prototype apps with some LLM. They're thrilled about doing something in hours that would've taken 10 times longer without the LLM.

I get it, I do use LLMs a lot, but I also kind of don't get it. As someone who's done countless prototypes, hack weeks at Spotify, startup hackathons, and side projects, I'm flabbergasted that people are THAT excited about LLMs being able to accelerate prototyping. Why would you want to automate away the best part?

Making the prototype, the first version, the MVP – that's the honeymoon phase of software development! It's pure creation, with no compromises or dependencies. It's ridiculously satisfying. Why would I pay money to skip the best part? Sure, it's maybe 10 hours of work "saved", but that is ridiculously insignificant in the life of a piece of software.

The Joy of Creating vs. The Drudgery of Maintenance

Here's a secret – in those early stages, you don't really need to pay a developer. As long as we can feed ourselves, it's pure fun. Getting paid to prototype is a delight, like playing a game.

I mean, I of course understand how it might seem overwhelming if you don't know coding, but so is painting if you've never painted. Once you know how to code, it's a joy.

Automating away the prototype, the MVP, is a little bit like speed-running the puppy part of having a dog.

What we actually need help with

The real work, the actual work, the hard part, the part I wouldn't do without pay, comes later.

When we have to maintain that big codebase, when customers start rolling in, when we need to handle all the edge cases, when we discover that weird concurrency bug that occurs every 14th hour... maybe... sometimes? That's the actual work.

Making an MVP isn't work – it's being in love. Being excited about automating that away is like wanting to skip the first 18 months of a relationship and jump straight into stability and moving in together.

Sure, the stable part is what is good and meaningful, but those first 18 months of being in love? That's a gift. Why would you want to remove that? Why rationalise it away? What is the actual point of that efficiency? Just because something eats up hours, does that mean it is prudent to optimise it away, or is there another criterion for what we target for optimising?

I don't want AI to remove the delightful work – I can handle that part myself. I want AI to help me with the drudgery. Prototyping isn't drudgery.

You know what is? Making repetitive changes across an enormous, brittle codebase that's 10 years old. That's what we need AI for.

Dreaming of Code Deletion

I won't give our upcoming sponsor away today, but I want to end with quoting their CEO:

"I dream of an AI that deletes code."

Now that is my kind of CEO - and I'll tell you why.

When I was at Spotify, we used GitHub so you could track your total code contributions.

In my last few months after giving notice, I was hell-bent on removing one of the most over-engineered pieces in the entire desktop client – a social sharing dialogue that was absurdly complex for what it did. It was my nemesis, and had been a blight in my code ownership for years. A true insult to nature, and I would not leave that monster for someone else, not even my worst enemy.

On my last day, I merged a pull request that replaced the entire thing with something that was, ostensibly, equivalent to a small shell script (slang background: "Go Away or I Will Replace You With a Simple Shell Script").

But point being, in that action, I removed so much code in one go that I ended up with a negative line count for my entire four-year career at Spotify. ☠️🤣

As always, stay curious 🧐🐒

Mattias Petter Johansson